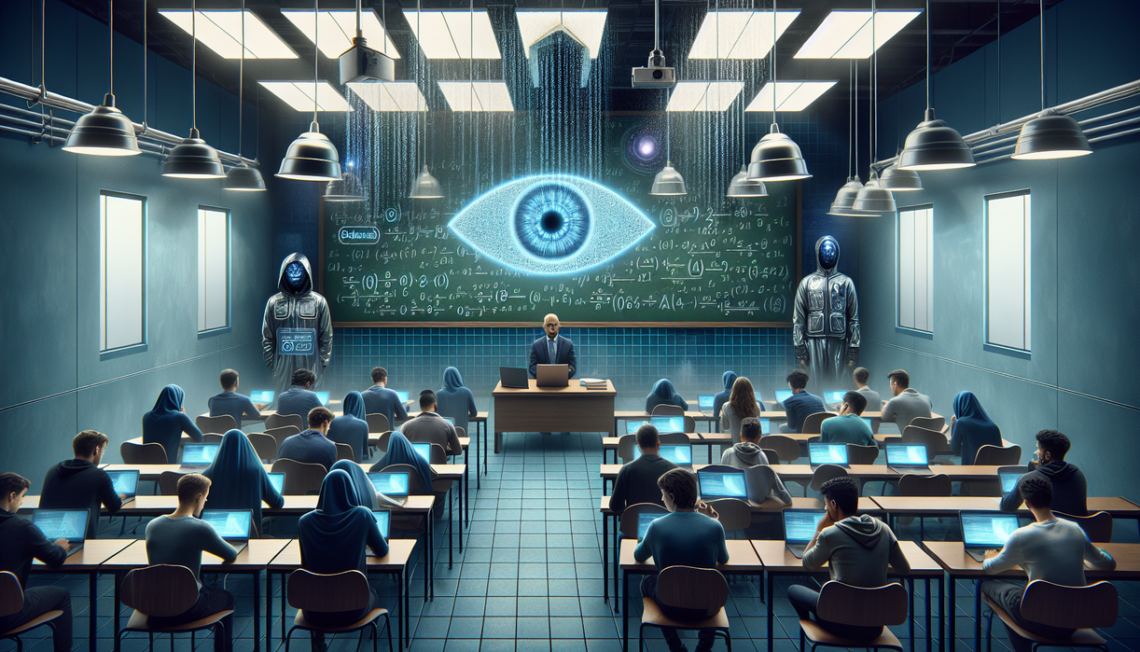

www.silkfaw.com – AI has rushed into classrooms faster than many students, teachers, and parents expected. Homework, essays, and projects can now be drafted in minutes with powerful AI tools, but this convenience casts a shadow: AI detectors. Schools deploy these systems to catch cheating, yet the result has been a new wave of worry among learners who fear being falsely accused. Instead of feeling excited about the technology, many students now associate AI with risk, suspicion, and punishment.

Behind the headlines about AI revolutionizing education lies a quieter emotional story. Students whisper about friends flagged by detectors even when they insist their work is original. Teachers, under pressure to protect academic integrity, start trusting algorithms more than conversations. This tension raises a deeper question: how can schools use AI without turning every assignment into a potential crime scene?

Why AI detectors make students nervous

At first glance, AI detectors seem like a simple solution: if AI can write, another AI should be able to recognize that writing. However, these systems do not read with human understanding; they rely on patterns, probabilities, and linguistic signals. A student who writes in a clear, formal style can easily resemble the output of an AI model. When that happens, the detector may flag the work as suspicious even though no cheating occurred.

This uncertainty generates constant anxiety. Learners start second-guessing every sentence: Is this too polished? Too simple? Too organized? Instead of focusing on ideas, they worry about pleasing an invisible algorithm. The classroom shifts from a place of exploration to one where every word feels like evidence in a trial. For teenagers already stressed about grades, this extra emotional burden cuts deep.

Trust also begins to erode. When a detector report contradicts a student’s explanation, some educators side with the software by default. After all, an AI tool looks objective, with its neat percentages and risk scores. Yet those numbers are estimates, not findings, and they hide a good deal of guesswork. When a teacher has known a student’s writing voice for years, it feels unfair for a new system to overrule that experience. Learners pick up on this shift and start to believe they are guilty until proven innocent.

How AI detectors actually work

Most AI detectors try to predict whether a text resembles machine-generated writing. They examine word choice, sentence patterns, rhythm, and repetition. Large language models often leave certain statistical fingerprints, such as evenly balanced phrasing or safe, middle-of-the-road vocabulary with little personal detail. Detectors search for these clues and then assign a probability score. Crucially, that score is not proof. It is an educated guess built from the examples the detector has seen before.

From a technical perspective, this method has serious limits. If a student writes cautiously, avoids slang, and follows the templates taught in class, their work may resemble AI output. Conversely, a student who heavily edits AI-generated text or mixes it with personal stories might slip past detection. The line between “AI style” and “human style” blurs quickly, especially as tools and students learn from each other.
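To see why careful, formal writing can trip a detector, here is a deliberately simplified sketch. Real detectors generally score text with trained language models, and their internals are not described here; this toy instead measures just one of the signals mentioned above, sentence rhythm, as the variation in sentence length (sometimes called burstiness). The function names, the logistic `midpoint` and `steepness` values, and both sample paragraphs are hypothetical, chosen only for illustration.

```python
import math
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Split text on '.', '!' or '?' and count the words in each sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Coefficient of variation of sentence length.

    Low values mean every sentence is about the same length (a uniform rhythm);
    high values mean short and long sentences are mixed together.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0  # not enough sentences to measure variation
    return statistics.stdev(lengths) / statistics.mean(lengths)


def machine_likeness(text: str, midpoint: float = 0.4, steepness: float = 10.0) -> float:
    """Map burstiness to a 0-1 'machine-likeness' score with a logistic curve.

    Uniform text (low burstiness) scores close to 1. The midpoint and
    steepness values are arbitrary assumptions, not taken from any real tool.
    """
    return 1.0 / (1.0 + math.exp(steepness * (burstiness(text) - midpoint)))


# A tidy, formal paragraph of the kind a careful student might write by hand.
careful_student = (
    "The experiment tested three plant species. Each species received equal water daily. "
    "The results showed clear growth differences. The tallest plants grew near the window."
)

# A looser, more personal paragraph with uneven sentence lengths.
casual_student = (
    "Plants grew. Honestly, I did not expect the ones by the window to shoot up so fast "
    "while the others barely moved at all. Weird. Still, the data is the data."
)

print(f"careful: {machine_likeness(careful_student):.2f}")
print(f"casual:  {machine_likeness(casual_student):.2f}")
```

Run as written, the tidy paragraph scores about 0.96 and the casual one about 0.00, even though both stand in for a human student's writing. The score reflects how uniform the rhythm is, not who wrote the text, which is exactly the weakness described above.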

False positives are more than technical errors; they carry a real emotional cost. Imagine staying up late perfecting an essay, only to be told that a machine suspects you of cheating. Defending yourself is difficult, because you cannot prove a negative. You can share drafts, notes, and outlines, yet the damage to trust has already been done. This is why relying blindly on AI detectors feels dangerous to many educators, including me.
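A quick calculation shows why even a fairly accurate detector produces false accusations once it screens a whole school. The sketch below assumes made-up rates: 1,000 essays, 5% involving cheating, a detector that catches 90% of those, and one that wrongly flags 2% of honest essays. None of these figures describe a real product, and `flag_breakdown` is a hypothetical helper.

```python
def flag_breakdown(total: int, cheat_rate: float, detection_rate: float,
                   false_positive_rate: float) -> tuple[float, float, float]:
    """Return (correct flags, false flags, share of flags that hit honest students)."""
    cheaters = total * cheat_rate
    honest = total - cheaters
    true_flags = cheaters * detection_rate        # cheaters correctly caught
    false_flags = honest * false_positive_rate    # honest students wrongly flagged
    flagged = true_flags + false_flags
    share_wrong = false_flags / flagged if flagged else 0.0
    return true_flags, false_flags, share_wrong


# Hypothetical rates, chosen only for illustration.
caught, wrongly_flagged, share_wrong = flag_breakdown(1000, 0.05, 0.90, 0.02)
print(f"correct flags: {caught:.0f}, false flags: {wrongly_flagged:.0f}")
print(f"share of flagged essays written honestly: {share_wrong:.0%}")
```

Under these assumptions the detector raises 45 correct flags and 19 false ones, so about 30% of flagged essays (19 of 64) come from honest students. Lowering the cheating rate while keeping the error rates fixed pushes that share higher, because there are fewer real cases for the false flags to hide among.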

The human cost of false accusations

When schools lean heavily on AI detectors, they risk turning honest students into collateral damage. A single mistaken accusation can shatter confidence, especially for learners who already doubt their abilities. I believe academic integrity matters, but so does psychological safety. Technology ought to help students grow, not trap them in fear of invisible systems. Every time a detector is treated as infallible, we teach young people that machines know them better than they know themselves. Education should move in the opposite direction: AI as a partner, teachers as wise interpreters, and students as trusted creators who learn to use powerful tools with courage, honesty, and reflection.